Custom Ceramic Resistors

Ceramic composition resistors are offered in multiple material types, with ratings ranging from 0.5 watts to 1000 watts in a single component. Ceramic resistors are compatible with a wide array of end products and are chemically inert and thermally stable, which makes this product line safe and durable. You can find ceramic resistors in a wide array of applications, including rail charging stations, switchgear, and motor controls.

Ohmite's entire line of ceramic resistors can be customized to your application's needs, including options like geometry and coating.

Ceramic resistor series offer the following points of customization:

- Specialized material compositions for high voltage and high energy

- Multiple termination and mounting options

- Coating options for dielectric and oil resistance

- Geometry options, including oval slabs, tubulars, and axials

Custom Wirewound Resistors

Ohmite's entire line of power resistors can be customized to your specific needs, including mounting options, constructions, and more. Request a quote today!

Custom Thick Film Resistors

Many of Ohmite's thick film products offer a range of customization options. Ohmite's entire line of power resistors can be customized to your specific needs, including mounting options, constructions, and more.

Custom Load Banks

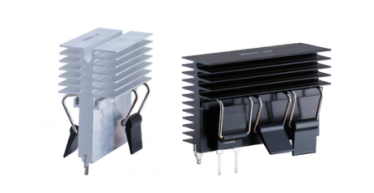

Custom Heatsinks

Ohmite offers RoHS-compliant heatsinks made from aluminum alloy 6063-T5 or equivalent materials to suit all your needs. Call now and request a quote today!

Custom Rheostats

Ohmite rheostats carry a UL rating and come in 11 standard sizes, allowing many variations. Ohmite also offers fully custom solutions. Call now and request a quote today!

Customization Options for Standard Parts

Ohmite's catalog contains thousands of individual part numbers, but that isn't all we can do. Ohmite's entire line of resistors and heatsinks can be customized. Call now!

Ohmite Engineering Team

Ohmite employs a large team of engineers to design and improve our standard and custom resistive and thermal technologies.

Custom Thick Film Heaters

Custom Resistor Assembly

Custom assemblies provide the flexibility of high energy dissipation while saving space, time, and money. Our products can be arranged in several layouts to expand your system's functionality.

Custom Power Resistors

Ohmite offers part series for many applications; high power, high current, high voltage, surge, and current sense are among the most popular. Ohmite's entire line of power resistors can be customized to your specific needs, including mounting options, constructions, and more.
