Ceramic Composition Resistors

Ohmite offers ceramic power resistors in multiple material types. These ceramic-based resistors can range from, 1/2 watt to a 1000 watts in a single component, and are compatible with a wide array of end products, including rail charging stations, switchgear, motor controls, defibrillators, accelerators, circuit breakers, high voltage power supplies, etc.

Non-inductive ceramic composition resistors provide several benefits compared to other non-inductive wirewound, film, and composition resistors. This is due to the lack of film or wire that can potentially malfunction. Ohmite's ceramic resistors are also chemically inert and thermally stable, assuring all users that this product line is safe and durable.

OC Series

The OC series of resistors can often replace carbon and ceramic composition resistors which can be difficult to source or carry prolonged lead times.

Read More

Tubular Ceramic Resistors

Ohmite's non-inductive, high voltage, high power tubular resistors are available in a wide range of standard sizes, ceramic materials terminations and mounting hardware.

Read More

Axial Ceramic Resistors

Non-inductive, high voltage, high-energy, high power axial-leaded resistors providing excellent performance where high peak power or high-energy pulses must be handled in a small size.

Read More

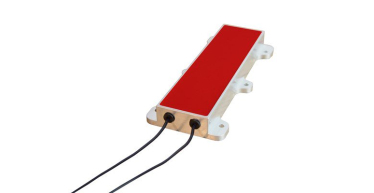

Slab Ceramic Resistors

Ohmite's 500 Series Non-Inductive Bulk Ceramic Slab Resistors provide high power and energy dissipation in a compact size and are available in different materials to meet your needs.

Read More

Disk and Washer Resistors

High-energy resistors in solid disk and washer styles from 1.60 to 5.90 inches (40.6 to 150 mm) in diameter.

Read More

Water-Cooled Resistors

Ohmite's direct and indirect water-cooled non-inductive resistors are capable of dissipating more power in a smaller package than water-cooled resistors previously available in the market.

Read More

Metallic Load Bank Resistors

Metallic load bank resistors are typically used for high power load testing of emergency power systems including generators, uninterruptible power supplies, turbines, battery systems and dynamic braking power dissipation for generators and large motors.

Read More

Custom Resistor Assemblies

Custom power resistor assemblies provide the flexibility of high energy dissipation while saving space, time and money.

Read More

Encapsulated Resistors

Ohmite offers the design and material expertise to meet your specific resistor package requirements.

Read MoreWhy Should You Partner with Ohmite?

Stability: Leader in Resistive Technology for 100 Years

Ohmite has manufactured resistors for 100 years! We've demonstrated a proven track record of creating quality resistive products for high power, high energy, and high voltage applications.

Flexibility: We are Experienced Problem Solvers

Whether it's our expansive catalog of standard parts, our many customization options for each series, or our ability to develop brand new products based on customer designs, Ohmite is dedicated to solving engineers' problems.

Accessibility: We're Here to Help as Trustworthy Partner

Ohmite's team of engineers are available to explain our technology, answer your questions, and consult on important design decisions to help make our customers' lives easier.